language:

- en

license: apache-2.0

tags:

- sentence-transformers

- sentence-similarity

- feature-extraction

- loss:MatryoshkaLoss

- loss:MultipleNegativesRankingLoss

datasets:

- sentence-transformers/squad

- sentence-transformers/trivia-qa-triplet

- sentence-transformers/all-nli

- sentence-transformers/pubmedqa

- sentence-transformers/hotpotqa

- sentence-transformers/miracl

- sentence-transformers/mr-tydi

- sentence-transformers/s2orc

- nthakur/swim-ir-monolingual

- sentence-transformers/paq

- tomaarsen/natural-questions-hard-negatives

pipeline_tag: sentence-similarity

library_name: sentence-transformers

metrics:

- cosine_accuracy@1

- cosine_accuracy@3

- cosine_accuracy@5

- cosine_accuracy@10

- cosine_precision@1

- cosine_precision@3

- cosine_precision@5

- cosine_precision@10

- cosine_recall@1

- cosine_recall@3

- cosine_recall@5

- cosine_recall@10

- cosine_ndcg@10

- cosine_mrr@10

- cosine_map@100

model-index:

- name: SSE Retrieval MRL 0.9999

results:

- task:

type: information-retrieval

name: Information Retrieval

dataset:

name: NanoClimateFEVER

type: NanoClimateFEVER

metrics:

- type: cosine_accuracy@1

value: 0.2

name: Cosine Accuracy@1

- type: cosine_accuracy@3

value: 0.48

name: Cosine Accuracy@3

- type: cosine_accuracy@5

value: 0.54

name: Cosine Accuracy@5

- type: cosine_accuracy@10

value: 0.68

name: Cosine Accuracy@10

- type: cosine_precision@1

value: 0.2

name: Cosine Precision@1

- type: cosine_precision@3

value: 0.18

name: Cosine Precision@3

- type: cosine_precision@5

value: 0.128

name: Cosine Precision@5

- type: cosine_precision@10

value: 0.102

name: Cosine Precision@10

- type: cosine_recall@1

value: 0.10166666666666666

name: Cosine Recall@1

- type: cosine_recall@3

value: 0.24166666666666667

name: Cosine Recall@3

- type: cosine_recall@5

value: 0.2733333333333334

name: Cosine Recall@5

- type: cosine_recall@10

value: 0.39233333333333337

name: Cosine Recall@10

- type: cosine_ndcg@10

value: 0.299751347194741

name: Cosine Ndcg@10

- type: cosine_mrr@10

value: 0.36113492063492053

name: Cosine Mrr@10

- type: cosine_map@100

value: 0.23438514328438953

name: Cosine Map@100

- task:

type: information-retrieval

name: Information Retrieval

dataset:

name: NanoDBPedia

type: NanoDBPedia

metrics:

- type: cosine_accuracy@1

value: 0.66

name: Cosine Accuracy@1

- type: cosine_accuracy@3

value: 0.84

name: Cosine Accuracy@3

- type: cosine_accuracy@5

value: 0.84

name: Cosine Accuracy@5

- type: cosine_accuracy@10

value: 0.9

name: Cosine Accuracy@10

- type: cosine_precision@1

value: 0.66

name: Cosine Precision@1

- type: cosine_precision@3

value: 0.5666666666666667

name: Cosine Precision@3

- type: cosine_precision@5

value: 0.52

name: Cosine Precision@5

- type: cosine_precision@10

value: 0.44400000000000006

name: Cosine Precision@10

- type: cosine_recall@1

value: 0.07827093153121195

name: Cosine Recall@1

- type: cosine_recall@3

value: 0.16032236337443734

name: Cosine Recall@3

- type: cosine_recall@5

value: 0.20952091065849757

name: Cosine Recall@5

- type: cosine_recall@10

value: 0.29831579691724436

name: Cosine Recall@10

- type: cosine_ndcg@10

value: 0.5493340697005651

name: Cosine Ndcg@10

- type: cosine_mrr@10

value: 0.7491666666666665

name: Cosine Mrr@10

- type: cosine_map@100

value: 0.4246657246617055

name: Cosine Map@100

- task:

type: information-retrieval

name: Information Retrieval

dataset:

name: NanoFEVER

type: NanoFEVER

metrics:

- type: cosine_accuracy@1

value: 0.46

name: Cosine Accuracy@1

- type: cosine_accuracy@3

value: 0.76

name: Cosine Accuracy@3

- type: cosine_accuracy@5

value: 0.82

name: Cosine Accuracy@5

- type: cosine_accuracy@10

value: 0.92

name: Cosine Accuracy@10

- type: cosine_precision@1

value: 0.46

name: Cosine Precision@1

- type: cosine_precision@3

value: 0.25333333333333335

name: Cosine Precision@3

- type: cosine_precision@5

value: 0.17199999999999996

name: Cosine Precision@5

- type: cosine_precision@10

value: 0.09599999999999997

name: Cosine Precision@10

- type: cosine_recall@1

value: 0.43666666666666665

name: Cosine Recall@1

- type: cosine_recall@3

value: 0.7166666666666667

name: Cosine Recall@3

- type: cosine_recall@5

value: 0.7866666666666667

name: Cosine Recall@5

- type: cosine_recall@10

value: 0.8866666666666667

name: Cosine Recall@10

- type: cosine_ndcg@10

value: 0.6808214594769284

name: Cosine Ndcg@10

- type: cosine_mrr@10

value: 0.6318253968253967

name: Cosine Mrr@10

- type: cosine_map@100

value: 0.6105163447649364

name: Cosine Map@100

- task:

type: information-retrieval

name: Information Retrieval

dataset:

name: NanoFiQA2018

type: NanoFiQA2018

metrics:

- type: cosine_accuracy@1

value: 0.32

name: Cosine Accuracy@1

- type: cosine_accuracy@3

value: 0.48

name: Cosine Accuracy@3

- type: cosine_accuracy@5

value: 0.58

name: Cosine Accuracy@5

- type: cosine_accuracy@10

value: 0.62

name: Cosine Accuracy@10

- type: cosine_precision@1

value: 0.32

name: Cosine Precision@1

- type: cosine_precision@3

value: 0.22666666666666666

name: Cosine Precision@3

- type: cosine_precision@5

value: 0.17600000000000002

name: Cosine Precision@5

- type: cosine_precision@10

value: 0.10200000000000001

name: Cosine Precision@10

- type: cosine_recall@1

value: 0.1861904761904762

name: Cosine Recall@1

- type: cosine_recall@3

value: 0.3212936507936508

name: Cosine Recall@3

- type: cosine_recall@5

value: 0.38946031746031745

name: Cosine Recall@5

- type: cosine_recall@10

value: 0.4546825396825397

name: Cosine Recall@10

- type: cosine_ndcg@10

value: 0.3743730832469537

name: Cosine Ndcg@10

- type: cosine_mrr@10

value: 0.4197142857142857

name: Cosine Mrr@10

- type: cosine_map@100

value: 0.3162051518688468

name: Cosine Map@100

- task:

type: information-retrieval

name: Information Retrieval

dataset:

name: NanoHotpotQA

type: NanoHotpotQA

metrics:

- type: cosine_accuracy@1

value: 0.64

name: Cosine Accuracy@1

- type: cosine_accuracy@3

value: 0.88

name: Cosine Accuracy@3

- type: cosine_accuracy@5

value: 0.94

name: Cosine Accuracy@5

- type: cosine_accuracy@10

value: 0.96

name: Cosine Accuracy@10

- type: cosine_precision@1

value: 0.64

name: Cosine Precision@1

- type: cosine_precision@3

value: 0.41999999999999993

name: Cosine Precision@3

- type: cosine_precision@5

value: 0.296

name: Cosine Precision@5

- type: cosine_precision@10

value: 0.15999999999999998

name: Cosine Precision@10

- type: cosine_recall@1

value: 0.32

name: Cosine Recall@1

- type: cosine_recall@3

value: 0.63

name: Cosine Recall@3

- type: cosine_recall@5

value: 0.74

name: Cosine Recall@5

- type: cosine_recall@10

value: 0.8

name: Cosine Recall@10

- type: cosine_ndcg@10

value: 0.7020829772895696

name: Cosine Ndcg@10

- type: cosine_mrr@10

value: 0.7678571428571429

name: Cosine Mrr@10

- type: cosine_map@100

value: 0.6273248247260853

name: Cosine Map@100

- task:

type: information-retrieval

name: Information Retrieval

dataset:

name: NanoMSMARCO

type: NanoMSMARCO

metrics:

- type: cosine_accuracy@1

value: 0.24

name: Cosine Accuracy@1

- type: cosine_accuracy@3

value: 0.46

name: Cosine Accuracy@3

- type: cosine_accuracy@5

value: 0.52

name: Cosine Accuracy@5

- type: cosine_accuracy@10

value: 0.6

name: Cosine Accuracy@10

- type: cosine_precision@1

value: 0.24

name: Cosine Precision@1

- type: cosine_precision@3

value: 0.1533333333333333

name: Cosine Precision@3

- type: cosine_precision@5

value: 0.10400000000000001

name: Cosine Precision@5

- type: cosine_precision@10

value: 0.06000000000000001

name: Cosine Precision@10

- type: cosine_recall@1

value: 0.24

name: Cosine Recall@1

- type: cosine_recall@3

value: 0.46

name: Cosine Recall@3

- type: cosine_recall@5

value: 0.52

name: Cosine Recall@5

- type: cosine_recall@10

value: 0.6

name: Cosine Recall@10

- type: cosine_ndcg@10

value: 0.4132396978554854

name: Cosine Ndcg@10

- type: cosine_mrr@10

value: 0.35374603174603175

name: Cosine Mrr@10

- type: cosine_map@100

value: 0.373289844122511

name: Cosine Map@100

- task:

type: information-retrieval

name: Information Retrieval

dataset:

name: NanoNFCorpus

type: NanoNFCorpus

metrics:

- type: cosine_accuracy@1

value: 0.38

name: Cosine Accuracy@1

- type: cosine_accuracy@3

value: 0.56

name: Cosine Accuracy@3

- type: cosine_accuracy@5

value: 0.6

name: Cosine Accuracy@5

- type: cosine_accuracy@10

value: 0.76

name: Cosine Accuracy@10

- type: cosine_precision@1

value: 0.38

name: Cosine Precision@1

- type: cosine_precision@3

value: 0.34666666666666673

name: Cosine Precision@3

- type: cosine_precision@5

value: 0.29600000000000004

name: Cosine Precision@5

- type: cosine_precision@10

value: 0.24599999999999997

name: Cosine Precision@10

- type: cosine_recall@1

value: 0.0338546319021278

name: Cosine Recall@1

- type: cosine_recall@3

value: 0.06462469800035843

name: Cosine Recall@3

- type: cosine_recall@5

value: 0.07727799038239798

name: Cosine Recall@5

- type: cosine_recall@10

value: 0.10829423267139048

name: Cosine Recall@10

- type: cosine_ndcg@10

value: 0.298189605225764

name: Cosine Ndcg@10

- type: cosine_mrr@10

value: 0.48890476190476195

name: Cosine Mrr@10

- type: cosine_map@100

value: 0.10911000304853699

name: Cosine Map@100

- task:

type: information-retrieval

name: Information Retrieval

dataset:

name: NanoNQ

type: NanoNQ

metrics:

- type: cosine_accuracy@1

value: 0.24

name: Cosine Accuracy@1

- type: cosine_accuracy@3

value: 0.52

name: Cosine Accuracy@3

- type: cosine_accuracy@5

value: 0.62

name: Cosine Accuracy@5

- type: cosine_accuracy@10

value: 0.7

name: Cosine Accuracy@10

- type: cosine_precision@1

value: 0.24

name: Cosine Precision@1

- type: cosine_precision@3

value: 0.1733333333333333

name: Cosine Precision@3

- type: cosine_precision@5

value: 0.124

name: Cosine Precision@5

- type: cosine_precision@10

value: 0.07400000000000001

name: Cosine Precision@10

- type: cosine_recall@1

value: 0.23

name: Cosine Recall@1

- type: cosine_recall@3

value: 0.5

name: Cosine Recall@3

- type: cosine_recall@5

value: 0.6

name: Cosine Recall@5

- type: cosine_recall@10

value: 0.69

name: Cosine Recall@10

- type: cosine_ndcg@10

value: 0.46521648817123007

name: Cosine Ndcg@10

- type: cosine_mrr@10

value: 0.39922222222222226

name: Cosine Mrr@10

- type: cosine_map@100

value: 0.4028459782678049

name: Cosine Map@100

- task:

type: information-retrieval

name: Information Retrieval

dataset:

name: NanoQuoraRetrieval

type: NanoQuoraRetrieval

metrics:

- type: cosine_accuracy@1

value: 0.86

name: Cosine Accuracy@1

- type: cosine_accuracy@3

value: 0.98

name: Cosine Accuracy@3

- type: cosine_accuracy@5

value: 0.98

name: Cosine Accuracy@5

- type: cosine_accuracy@10

value: 1

name: Cosine Accuracy@10

- type: cosine_precision@1

value: 0.86

name: Cosine Precision@1

- type: cosine_precision@3

value: 0.37999999999999995

name: Cosine Precision@3

- type: cosine_precision@5

value: 0.23599999999999993

name: Cosine Precision@5

- type: cosine_precision@10

value: 0.12399999999999999

name: Cosine Precision@10

- type: cosine_recall@1

value: 0.7706666666666666

name: Cosine Recall@1

- type: cosine_recall@3

value: 0.932

name: Cosine Recall@3

- type: cosine_recall@5

value: 0.9453333333333334

name: Cosine Recall@5

- type: cosine_recall@10

value: 0.9626666666666668

name: Cosine Recall@10

- type: cosine_ndcg@10

value: 0.9094074101386184

name: Cosine Ndcg@10

- type: cosine_mrr@10

value: 0.9122222222222223

name: Cosine Mrr@10

- type: cosine_map@100

value: 0.8846858964622123

name: Cosine Map@100

- task:

type: information-retrieval

name: Information Retrieval

dataset:

name: NanoSCIDOCS

type: NanoSCIDOCS

metrics:

- type: cosine_accuracy@1

value: 0.46

name: Cosine Accuracy@1

- type: cosine_accuracy@3

value: 0.62

name: Cosine Accuracy@3

- type: cosine_accuracy@5

value: 0.68

name: Cosine Accuracy@5

- type: cosine_accuracy@10

value: 0.76

name: Cosine Accuracy@10

- type: cosine_precision@1

value: 0.46

name: Cosine Precision@1

- type: cosine_precision@3

value: 0.29333333333333333

name: Cosine Precision@3

- type: cosine_precision@5

value: 0.252

name: Cosine Precision@5

- type: cosine_precision@10

value: 0.162

name: Cosine Precision@10

- type: cosine_recall@1

value: 0.09666666666666668

name: Cosine Recall@1

- type: cosine_recall@3

value: 0.18166666666666664

name: Cosine Recall@3

- type: cosine_recall@5

value: 0.26066666666666666

name: Cosine Recall@5

- type: cosine_recall@10

value: 0.33466666666666667

name: Cosine Recall@10

- type: cosine_ndcg@10

value: 0.33808831519730853

name: Cosine Ndcg@10

- type: cosine_mrr@10

value: 0.5508571428571427

name: Cosine Mrr@10

- type: cosine_map@100

value: 0.260404942677937

name: Cosine Map@100

- task:

type: information-retrieval

name: Information Retrieval

dataset:

name: NanoArguAna

type: NanoArguAna

metrics:

- type: cosine_accuracy@1

value: 0.14

name: Cosine Accuracy@1

- type: cosine_accuracy@3

value: 0.5

name: Cosine Accuracy@3

- type: cosine_accuracy@5

value: 0.56

name: Cosine Accuracy@5

- type: cosine_accuracy@10

value: 0.7

name: Cosine Accuracy@10

- type: cosine_precision@1

value: 0.14

name: Cosine Precision@1

- type: cosine_precision@3

value: 0.16666666666666669

name: Cosine Precision@3

- type: cosine_precision@5

value: 0.11200000000000002

name: Cosine Precision@5

- type: cosine_precision@10

value: 0.07

name: Cosine Precision@10

- type: cosine_recall@1

value: 0.14

name: Cosine Recall@1

- type: cosine_recall@3

value: 0.5

name: Cosine Recall@3

- type: cosine_recall@5

value: 0.56

name: Cosine Recall@5

- type: cosine_recall@10

value: 0.7

name: Cosine Recall@10

- type: cosine_ndcg@10

value: 0.41047352977721935

name: Cosine Ndcg@10

- type: cosine_mrr@10

value: 0.3192777777777777

name: Cosine Mrr@10

- type: cosine_map@100

value: 0.33248820268587403

name: Cosine Map@100

- task:

type: information-retrieval

name: Information Retrieval

dataset:

name: NanoSciFact

type: NanoSciFact

metrics:

- type: cosine_accuracy@1

value: 0.54

name: Cosine Accuracy@1

- type: cosine_accuracy@3

value: 0.6

name: Cosine Accuracy@3

- type: cosine_accuracy@5

value: 0.66

name: Cosine Accuracy@5

- type: cosine_accuracy@10

value: 0.74

name: Cosine Accuracy@10

- type: cosine_precision@1

value: 0.54

name: Cosine Precision@1

- type: cosine_precision@3

value: 0.21333333333333332

name: Cosine Precision@3

- type: cosine_precision@5

value: 0.14400000000000002

name: Cosine Precision@5

- type: cosine_precision@10

value: 0.08199999999999999

name: Cosine Precision@10

- type: cosine_recall@1

value: 0.505

name: Cosine Recall@1

- type: cosine_recall@3

value: 0.58

name: Cosine Recall@3

- type: cosine_recall@5

value: 0.645

name: Cosine Recall@5

- type: cosine_recall@10

value: 0.735

name: Cosine Recall@10

- type: cosine_ndcg@10

value: 0.6175889955513287

name: Cosine Ndcg@10

- type: cosine_mrr@10

value: 0.5933015873015873

name: Cosine Mrr@10

- type: cosine_map@100

value: 0.5823752505606269

name: Cosine Map@100

- task:

type: information-retrieval

name: Information Retrieval

dataset:

name: NanoTouche2020

type: NanoTouche2020

metrics:

- type: cosine_accuracy@1

value: 0.6530612244897959

name: Cosine Accuracy@1

- type: cosine_accuracy@3

value: 0.9183673469387755

name: Cosine Accuracy@3

- type: cosine_accuracy@5

value: 0.9591836734693877

name: Cosine Accuracy@5

- type: cosine_accuracy@10

value: 1

name: Cosine Accuracy@10

- type: cosine_precision@1

value: 0.6530612244897959

name: Cosine Precision@1

- type: cosine_precision@3

value: 0.6394557823129251

name: Cosine Precision@3

- type: cosine_precision@5

value: 0.6244897959183674

name: Cosine Precision@5

- type: cosine_precision@10

value: 0.5551020408163265

name: Cosine Precision@10

- type: cosine_recall@1

value: 0.04446978335433603

name: Cosine Recall@1

- type: cosine_recall@3

value: 0.12883713641764533

name: Cosine Recall@3

- type: cosine_recall@5

value: 0.20234901450308018

name: Cosine Recall@5

- type: cosine_recall@10

value: 0.3514245193484443

name: Cosine Recall@10

- type: cosine_ndcg@10

value: 0.602875180920439

name: Cosine Ndcg@10

- type: cosine_mrr@10

value: 0.7852283770651117

name: Cosine Mrr@10

- type: cosine_map@100

value: 0.4539105214909128

name: Cosine Map@100

- task:

type: nano-beir

name: Nano BEIR

dataset:

name: NanoBEIR mean

type: NanoBEIR_mean

metrics:

- type: cosine_accuracy@1

value: 0.4456200941915227

name: Cosine Accuracy@1

- type: cosine_accuracy@3

value: 0.6614128728414441

name: Cosine Accuracy@3

- type: cosine_accuracy@5

value: 0.7153218210361068

name: Cosine Accuracy@5

- type: cosine_accuracy@10

value: 0.7953846153846154

name: Cosine Accuracy@10

- type: cosine_precision@1

value: 0.4456200941915227

name: Cosine Precision@1

- type: cosine_precision@3

value: 0.30867608581894296

name: Cosine Precision@3

- type: cosine_precision@5

value: 0.24496075353218213

name: Cosine Precision@5

- type: cosine_precision@10

value: 0.17516169544740973

name: Cosine Precision@10

- type: cosine_recall@1

value: 0.2448809607419091

name: Cosine Recall@1

- type: cosine_recall@3

value: 0.416698296045084

name: Cosine Recall@3

- type: cosine_recall@5

value: 0.47766217176956105

name: Cosine Recall@5

- type: cosine_recall@10

value: 0.5626192632271503

name: Cosine Recall@10

- type: cosine_ndcg@10

value: 0.5124186276727808

name: Cosine Ndcg@10

- type: cosine_mrr@10

value: 0.5640352719842516

name: Cosine Mrr@10

- type: cosine_map@100

value: 0.43170829450941384

name: Cosine Map@100

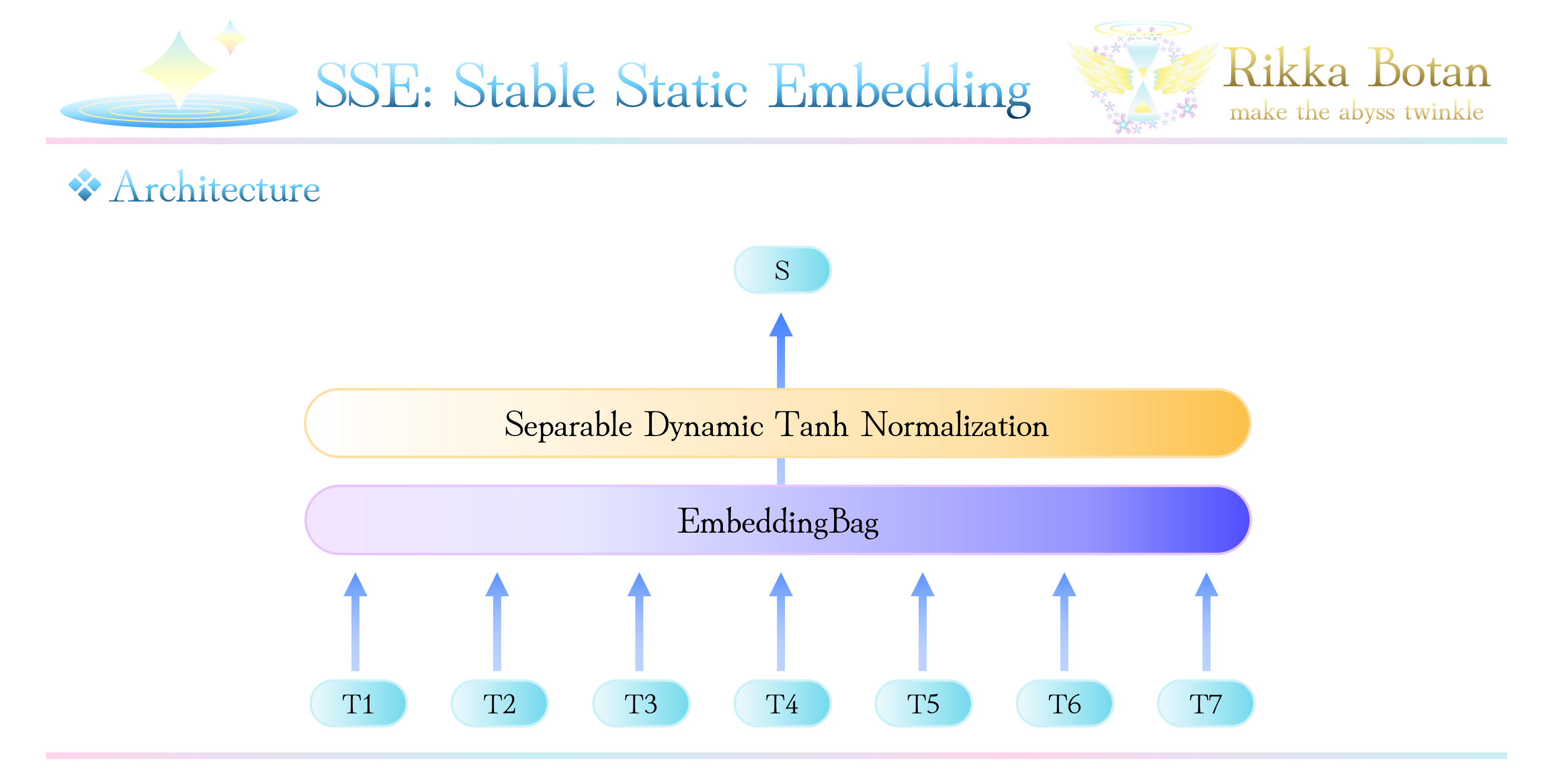

🩵 SSE: Stable Static Embedding for Retrieval MRL 🩵

A lightweight, fast, and powerful embedding model

Performance Snapshot

Our SSE model achieves NDCG@10 = 0.5124 on NanoBEIR, slightly outperforming the popular static-retrieval-mrl-en-v1 (0.5032) while using half the dimensions (512 vs 1024)! 💫 Plus, retrieval is ~2× faster thanks to our compact 512D embeddings, which halve the cost of every similarity computation and the size of the index.

| Model | NanoBEIR NDCG@10 | Dimensions | Parameters | Speed Advantage | License |
|---|---|---|---|---|---|
| SSE Retrieval MRL | 0.5124 ✨ | 512 | ~16M 🪽 | ~2x faster retrieval (ultra-efficient!) | Apache 2.0 |
| static-retrieval-mrl-en-v1 | 0.5032 | 1024 | ~33M | baseline | Apache 2.0 |
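Simple arithmetic supports the ~2× figure. For exact (brute-force) cosine search over float32 vectors, both the index memory and the multiply-adds per query grow linearly with the embedding dimension. The sketch below assumes a hypothetical corpus of 1M documents. Real wall-clock speedups also depend on hardware, batching, and whether an approximate nearest-neighbor index is used.

```python
def brute_force_cost(num_docs: int, dim: int, bytes_per_value: int = 4) -> tuple[int, int]:
    """Return (index size in bytes, multiply-adds per query) for exact dot-product search."""
    index_bytes = num_docs * dim * bytes_per_value  # float32 = 4 bytes per value
    mults_per_query = num_docs * dim                # one multiply-add per coordinate per doc
    return index_bytes, mults_per_query

NUM_DOCS = 1_000_000  # hypothetical corpus size, for illustration only

for name, dim in [("SSE Retrieval MRL", 512), ("static-retrieval-mrl-en-v1", 1024)]:
    index_bytes, mults = brute_force_cost(NUM_DOCS, dim)
    print(f"{name:28s} dim={dim:5d} index={index_bytes / 2**30:.2f} GiB mults/query={mults:,}")
```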

🩵 Why Choose SSE Retrieval MRL? 🩵

✅ Higher NanoBEIR NDCG@10 than static-retrieval-mrl-en-v1 (0.5124 vs 0.5032), a comparable small model (<35M params)

✅ Only ~16M parameters: 27% smaller than MiniLM-L6 (22M) and 52% smaller than BGE-small (33M)

✅ 512D native output: half the size of static-retrieval-mrl-en-v1's 1024D embeddings, with slightly higher NDCG@10

✅ Matryoshka-ready: truncate smoothly to 256D/128D/64D/32D with graceful degradation

✅ Apache 2.0 licensed: free for commercial & personal use

✅ CPU-optimized: runs efficiently on edge devices & modest hardware
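Matryoshka truncation keeps only the first *d* coordinates of each embedding. Recent sentence-transformers releases expose this through the `truncate_dim` argument of `SentenceTransformer(...)`. The NumPy sketch below shows the equivalent manual operation, re-normalization, and ranking. It runs on random stand-in vectors, not real model outputs. The dimension list mirrors the sizes named above.

```python
import numpy as np

MATRYOSHKA_DIMS = (512, 256, 128, 64, 32)  # sizes listed in this model card

def truncate_and_normalize(embeddings: np.ndarray, dim: int) -> np.ndarray:
    """Keep the first `dim` coordinates and rescale each row to unit length."""
    truncated = embeddings[:, :dim]
    norms = np.linalg.norm(truncated, axis=1, keepdims=True)
    return truncated / np.maximum(norms, 1e-12)  # guard against division by zero

# Random stand-ins for model.encode(...) output: row 0 = query, rows 1.. = documents.
rng = np.random.default_rng(seed=0)
full = rng.standard_normal((6, 512))

for dim in MATRYOSHKA_DIMS:
    vectors = truncate_and_normalize(full, dim)
    scores = vectors[1:] @ vectors[0]   # cosine similarities to the query
    ranking = np.argsort(-scores)       # document indices, best first
    print(dim, ranking.tolist())
```

The model is trained so that the leading dimensions carry the most information. On real embeddings, rankings at 256D or 128D should therefore stay close to the 512D ranking. With the random vectors used here, no such agreement is expected.

Cosine similarity is scale-invariant, so truncation alone is enough. If you compare vectors with a plain dot product, re-normalize after truncating: the prefix of a unit-length 512D vector is generally shorter than unit length.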

🩵 Model Details 🩵

| Property | Value |
|---|---|
| Model Type | Sentence Transformer (SSE architecture) |
| Max Sequence Length | ∞ tokens |
| Output Dimension | 512 (with Matryoshka truncation down to 32D!) |
| Similarity Function | Cosine Similarity |
| Language | English |
| License | Apache 2.0 |

```
SentenceTransformer(
  (0): SSE(
    (embedding): EmbeddingBag(30522, 512, mode='mean')
    (dyt): SeparableDyT()
  )
)
```
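The printed module tree suggests a two-stage forward pass:

- Average the static token vectors (`EmbeddingBag(..., mode='mean')`).
- Apply an elementwise Dynamic Tanh, `gamma * tanh(alpha * x) + beta`.

Below is a minimal NumPy sketch of that reading, not the author's implementation. The weights are random placeholders. Treating "Separable" DyT as a per-dimension `alpha` is our assumption; the printout does not confirm it.

```python
import numpy as np

VOCAB_SIZE, DIM = 30522, 512  # shapes from the printed EmbeddingBag

rng = np.random.default_rng(seed=0)
# Placeholder parameters; the real model loads learned values instead.
embedding_table = rng.standard_normal((VOCAB_SIZE, DIM), dtype=np.float32)
alpha = np.full(DIM, 0.5, dtype=np.float32)  # ASSUMED per-dimension DyT scale
gamma = np.ones(DIM, dtype=np.float32)       # DyT output scale
beta = np.zeros(DIM, dtype=np.float32)       # DyT output shift

def sse_embed(token_ids: list[int]) -> np.ndarray:
    """Mean-pool static token vectors, then apply DyT elementwise."""
    pooled = embedding_table[token_ids].mean(axis=0)  # like EmbeddingBag(mode='mean')
    return gamma * np.tanh(alpha * pooled) + beta     # gamma * tanh(alpha * x) + beta

vec = sse_embed([101, 7592, 2088, 102])  # arbitrary token ids, for illustration
print(vec.shape)
```

Pooling is a plain average, so if the printout lists the full pipeline, the output ignores word order. That is what "works without attention" means in practice. Each text costs one table lookup per token plus one elementwise `tanh`.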

🩵 Mathematical formulations 🩵

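Dynamic Tanh Normalization (DyT) enables magnitude-adaptive gradient flow for static embeddings. For each input dimension $x$, DyT computes

$$\mathrm{DyT}(x) = \gamma \tanh(\alpha x) + \beta$$

with learnable parameters $\alpha$, $\gamma$, and $\beta$. The gradient with respect to $x$ is:

$$\frac{\partial\,\mathrm{DyT}(x)}{\partial x} = \gamma\,\alpha\,\operatorname{sech}^2(\alpha x) = \gamma\,\alpha\left(1 - \tanh^2(\alpha x)\right)$$

For saturated dimensions ($|\alpha x| \gg 1$), $\operatorname{sech}^2(\alpha x) \approx 4e^{-2|\alpha x|}$ decays exponentially, suppressing the gradient. For non-saturated dimensions ($|\alpha x| \ll 1$), $\operatorname{sech}^2(\alpha x) \approx 1$, so the gradient stays near the constant $\gamma\alpha$.

This magnitude-dependent gating attenuates learning signals from noisy, large-magnitude dimensions. At the same time, it maintains full gradient flow for stable, informative dimensions. The result is an implicit regularization that can improve generalization without extra regularization hyperparameters.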
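The DyT derivative can be checked numerically. For $\mathrm{DyT}(x) = \gamma\tanh(\alpha x) + \beta$, the sketch below compares the analytic derivative $\gamma\alpha(1-\tanh^2(\alpha x))$ with a central finite difference. It also prints the exponential approximation $4e^{-2|\alpha x|}$ for the saturated regime. The scalar values of `alpha`, `gamma`, and `beta` are illustrative, not the trained ones.

```python
import numpy as np

def dyt(x, alpha, gamma, beta):
    """Dynamic Tanh: gamma * tanh(alpha * x) + beta."""
    return gamma * np.tanh(alpha * x) + beta

def dyt_grad(x, alpha, gamma):
    """Analytic derivative: gamma * alpha * sech^2(alpha * x)."""
    return gamma * alpha * (1.0 - np.tanh(alpha * x) ** 2)

alpha, gamma, beta = 1.0, 1.0, 0.0  # illustrative values only
xs = np.array([0.01, 0.5, 1.0, 3.0, 6.0])

analytic = dyt_grad(xs, alpha, gamma)
eps = 1e-6  # central finite-difference step
numeric = (dyt(xs + eps, alpha, gamma, beta) - dyt(xs - eps, alpha, gamma, beta)) / (2 * eps)

for x, g in zip(xs, analytic):
    approx = 4 * np.exp(-2 * abs(alpha * x))  # saturated-regime approximation
    print(f"x={x:5.2f}  dDyT/dx={g:.3e}  4e^(-2|ax|)={approx:.3e}")
```

Near zero the gradient is about $\gamma\alpha = 1$. By $x = 6$ it has shrunk by roughly four orders of magnitude, matching the exponential approximation.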

🩵 Evaluation Results (NanoBEIR) 🩵

| Dataset | NDCG@10 | MRR@10 | MAP@100 |
|---|---|---|---|
| NanoBEIR Mean | 0.5124 ✨ | 0.5640 | 0.4317 |
| NanoClimateFEVER | 0.2998 | 0.3611 | 0.2344 |
| NanoDBPedia | 0.5493 | 0.7492 | 0.4247 |
| NanoFEVER | 0.6808 | 0.6318 | 0.6105 |
| NanoFiQA2018 | 0.3744 | 0.4197 | 0.3162 |
| NanoHotpotQA | 0.7021 | 0.7679 | 0.6273 |
| NanoMSMARCO | 0.4132 | 0.3537 | 0.3733 |
| NanoNFCorpus | 0.2982 | 0.4889 | 0.1091 |
| NanoNQ | 0.4652 | 0.3992 | 0.4028 |
| NanoQuoraRetrieval | 0.9094 ✨ | 0.9122 | 0.8847 |
| NanoSCIDOCS | 0.3381 | 0.5509 | 0.2604 |
| NanoArguAna | 0.4105 | 0.3193 | 0.3325 |
| NanoSciFact | 0.6176 | 0.5933 | 0.5824 |
| NanoTouche2020 | 0.6029 | 0.7852 | 0.4539 |
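NDCG@10 rewards relevant documents near the top of a ranking, discounting each position logarithmically. The sketch below assumes binary relevance labels, a simplification since BEIR-style relevance judgments may be graded. It also checks that the "NanoBEIR Mean" row matches an unweighted average of the 13 per-dataset NDCG@10 values in the table.

```python
import math

def ndcg_at_k(ranked_ids, relevant_ids, k=10):
    """Binary-relevance NDCG@k: DCG of the ranking divided by the ideal DCG."""
    dcg = sum(
        1.0 / math.log2(position + 2)  # position 0 -> discount log2(2) = 1
        for position, doc_id in enumerate(ranked_ids[:k])
        if doc_id in relevant_ids
    )
    ideal_hits = min(len(relevant_ids), k)  # best case: all relevant docs ranked first
    idcg = sum(1.0 / math.log2(position + 2) for position in range(ideal_hits))
    return dcg / idcg if idcg > 0 else 0.0

# Per-dataset NDCG@10 values copied from the evaluation table.
per_dataset = [0.2998, 0.5493, 0.6808, 0.3744, 0.7021, 0.4132, 0.2982,
               0.4652, 0.9094, 0.3381, 0.4105, 0.6176, 0.6029]
macro_mean = sum(per_dataset) / len(per_dataset)
print(f"{macro_mean:.4f}")
```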

Top performance on duplicate-question retrieval (NanoQuoraRetrieval, 0.9094), followed by multi-hop QA (NanoHotpotQA, 0.7021) and fact verification (NanoFEVER, 0.6808)!

🩵 How to use 🩵

```python
import torch
from sentence_transformers import SentenceTransformer

# load (remote code enabled)
model = SentenceTransformer(
    "RikkaBotan/stable-static-embedding-fast-retrieval-mrl-en",
    trust_remote_code=True,
    device="cuda" if torch.cuda.is_available() else "cpu",
)

# inference
sentences = [
    "Stable Static embedding is interesting.",
    "SSE works without attention.",
]

with torch.no_grad():
    embeddings = model.encode(
        sentences,
        convert_to_tensor=True,
        normalize_embeddings=True,
        batch_size=32,
    )

# cosine similarity
# (for normalized embeddings this equals embeddings @ embeddings.T)
cosine_sim = model.similarity(embeddings, embeddings)

print("embeddings shape:", embeddings.shape)
print("cosine similarity matrix:")
print(cosine_sim)
```

🩵 Retrieval usage 🩵

```python
import torch
from sentence_transformers import SentenceTransformer

# load (remote code enabled)
model = SentenceTransformer(
    "RikkaBotan/stable-static-embedding-fast-retrieval-mrl-en",
    trust_remote_code=True,
    device="cuda" if torch.cuda.is_available() else "cpu",
)

# inference
query = "What is Stable Static Embedding?"
sentences = [
    "SSE: Stable Static embedding works without attention.",
    "Stable Static Embedding is a fast embedding method designed for retrieval tasks.",
    "Static embeddings are often compared with transformer-based sentence encoders.",
    "I cooked pasta last night while listening to jazz music.",
    "Large language models are commonly trained using next-token prediction objectives.",
    "Instruction tuning improves the ability of LLMs to follow human-written prompts.",
]

with torch.no_grad():
    embeddings = model.encode(
        [query] + sentences,
        convert_to_tensor=True,
        normalize_embeddings=True,
        batch_size=32,
    )

print("embeddings shape:", embeddings.shape)

# cosine similarity between the query (row 0) and every candidate sentence
similarities = model.similarity(embeddings[0], embeddings[1:])

for i, similarity in enumerate(similarities[0].tolist()):
    print(f"{similarity:.05f}: {sentences[i]}")
```

🩵 Training Hyperparameters 🩵

Non-Default Hyperparameters

- `eval_strategy`: steps
- `per_device_train_batch_size`: 512
- `gradient_accumulation_steps`: 8
- `learning_rate`: 0.1
- `adam_beta2`: 0.9999
- `adam_epsilon`: 1e-10
- `num_train_epochs`: 1
- `lr_scheduler_type`: cosine
- `warmup_ratio`: 0.1
- `bf16`: True
- `dataloader_num_workers`: 4
- `batch_sampler`: no_duplicates
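These settings have two practical consequences:

- **Batch size and negatives.** On a single device, each optimizer step sees 512 × 8 = 4096 training examples. With MultipleNegativesRankingLoss, however, the in-batch negatives come from each forward batch of 512. Gradient accumulation therefore enlarges the step, not the pool of negatives.
- **Learning-rate schedule.** `lr_scheduler_type: cosine` with `warmup_ratio: 0.1` gives a linear warm-up to the peak learning rate over the first 10% of steps, then a half-cosine decay toward zero.

The function below is a sketch that mirrors the shape of Hugging Face Transformers' cosine-with-warmup schedule. The total step count is a hypothetical placeholder, since the card does not report it.

```python
import math

PER_DEVICE_BATCH = 512
GRAD_ACCUM_STEPS = 8
PEAK_LR = 0.1
WARMUP_RATIO = 0.1

def cosine_lr_with_warmup(step: int, total_steps: int) -> float:
    """Linear warm-up to PEAK_LR, then half-cosine decay to 0 (Transformers-style)."""
    warmup_steps = math.ceil(total_steps * WARMUP_RATIO)
    if step < warmup_steps:
        return PEAK_LR * step / max(1, warmup_steps)
    progress = (step - warmup_steps) / max(1, total_steps - warmup_steps)
    return PEAK_LR * max(0.0, 0.5 * (1.0 + math.cos(math.pi * progress)))

# Examples per optimizer step, assuming a single device.
effective_batch = PER_DEVICE_BATCH * GRAD_ACCUM_STEPS
TOTAL_STEPS = 1000  # hypothetical; the real step count is not reported

for step in (0, 50, 100, 550, 1000):
    print(step, round(cosine_lr_with_warmup(step, TOTAL_STEPS), 5))
```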

🩵 Training Datasets 🩵

We trained on the following 16 dataset subsets:

| Dataset |
|---|
| squad |
| trivia_qa |
| allnli |
| pubmedqa |
| hotpotqa |
| miracl |
| mr_tydi |
| msmarco |
| msmarco_10m |
| msmarco_hard |
| mldr |
| s2orc |
| swim_ir |
| paq |
| nq |
| scidocs |

All datasets were trained with MatryoshkaLoss wrapped around MultipleNegativesRankingLoss, learning representations at multiple scales, like Russian nesting dolls!
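Here is a minimal NumPy sketch of what MatryoshkaLoss wrapped around MultipleNegativesRankingLoss computes for (anchor, positive) pairs. The in-batch contrastive loss is evaluated on several prefixes of the embedding, and the results are summed.

A few assumptions and simplifications apply:

- The scale of 20 and the equal weights follow sentence-transformers' defaults as we understand them.
- The dimension list used in training is an assumption; it takes the sizes named earlier in this card.
- Hard negatives, which some of the datasets include, are omitted for brevity.

```python
import numpy as np

def mnrl_loss(anchors: np.ndarray, positives: np.ndarray, scale: float = 20.0) -> float:
    """In-batch contrastive loss: anchor i should match positive i against all other positives."""
    a = anchors / np.linalg.norm(anchors, axis=1, keepdims=True)
    p = positives / np.linalg.norm(positives, axis=1, keepdims=True)
    logits = scale * (a @ p.T)                    # (batch, batch) scaled cosine similarities
    logits -= logits.max(axis=1, keepdims=True)   # numerical stability for the softmax
    log_probs = logits - np.log(np.exp(logits).sum(axis=1, keepdims=True))
    return float(-np.mean(np.diag(log_probs)))    # cross-entropy with labels 0..batch-1

def matryoshka_mnrl_loss(anchors, positives, dims=(512, 256, 128, 64, 32), weights=None):
    """Sum of MNRL losses computed on the first d coordinates for each d in `dims` (assumed list)."""
    weights = weights if weights is not None else [1.0] * len(dims)
    return sum(w * mnrl_loss(anchors[:, :d], positives[:, :d]) for d, w in zip(dims, weights))

rng = np.random.default_rng(seed=0)
anchors = rng.standard_normal((8, 512))
matched = anchors + 0.1 * rng.standard_normal((8, 512))  # positives close to their anchors
unrelated = rng.standard_normal((8, 512))                 # positives unrelated to anchors
print(matryoshka_mnrl_loss(anchors, matched), matryoshka_mnrl_loss(anchors, unrelated))
```

Because every prefix is penalized separately, the leading coordinates are pushed to carry useful signal on their own. This is what makes the truncated 256D to 32D embeddings usable.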

🩵 Training results 🩵

🩵 About me 🩵

I am a Japanese independent researcher with a shy and pampered personality. Twin-tail hair is my charm point. I am interested in NLP and usually use Python and C.

X(Twitter): https://twitter.com/peony__snow

🩵 Acknowledgements 🩵

The author acknowledges the support of Saldra, Witness and Lumina Logic Minds in providing the computational resources used in this work.

I thank the developers of sentence-transformers, Python, and PyTorch.

I thank all the researchers for their efforts to date.

I thank Japan's high standard of education.

And most of all, thank you for your interest in this repository.

🩵 Citation 🩵

BibTeX

Sentence Transformers

```bibtex
@inproceedings{reimers-2019-sentence-bert,
    title = "Sentence-BERT: Sentence Embeddings using Siamese BERT-Networks",
    author = "Reimers, Nils and Gurevych, Iryna",
    booktitle = "Proceedings of the 2019 Conference on Empirical Methods in Natural Language Processing",
    month = "11",
    year = "2019",
    publisher = "Association for Computational Linguistics",
    url = "https://arxiv.org/abs/1908.10084",
}
```

MatryoshkaLoss

```bibtex
@misc{kusupati2024matryoshka,
    title={Matryoshka Representation Learning},
    author={Aditya Kusupati and Gantavya Bhatt and Aniket Rege and Matthew Wallingford and Aditya Sinha and Vivek Ramanujan and William Howard-Snyder and Kaifeng Chen and Sham Kakade and Prateek Jain and Ali Farhadi},
    year={2024},
    eprint={2205.13147},
    archivePrefix={arXiv},
    primaryClass={cs.LG}
}
```

MultipleNegativesRankingLoss

```bibtex
@misc{henderson2017efficient,
    title={Efficient Natural Language Response Suggestion for Smart Reply},
    author={Matthew Henderson and Rami Al-Rfou and Brian Strope and Yun-hsuan Sung and Laszlo Lukacs and Ruiqi Guo and Sanjiv Kumar and Balint Miklos and Ray Kurzweil},
    year={2017},
    eprint={1705.00652},
    archivePrefix={arXiv},
    primaryClass={cs.CL}
}
```